Ishaan Bose

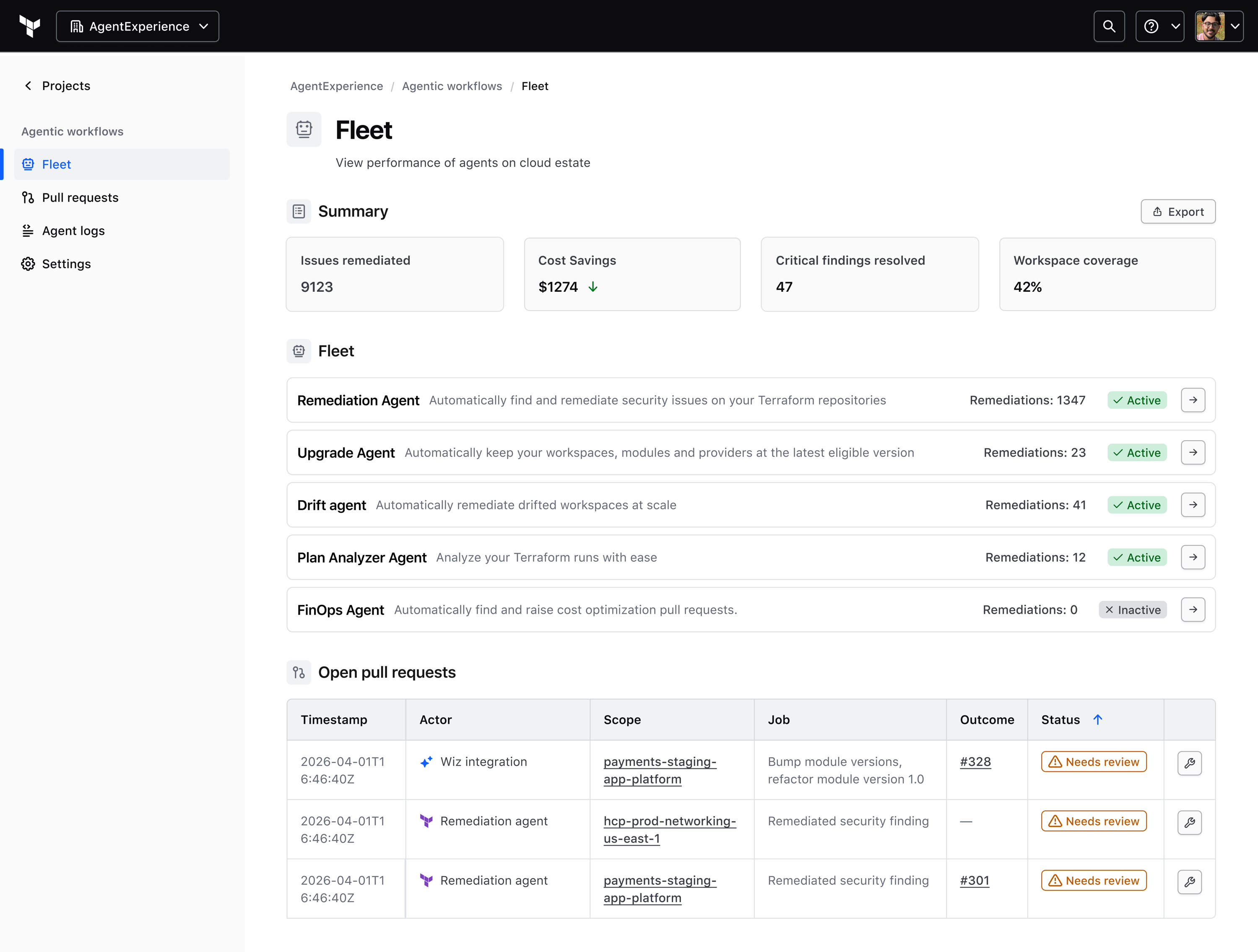

The core challenge was not a lack of detection. Security scanners, cost tools, and internal systems were already surfacing misconfigurations and issues. The gap was between finding a problem and getting a fix back into Terraform code.

Security teams spotted problems but didn't own the code. Platform teams owned standards but not every repo. App teams were closest to delivery but rarely set up for infra remediation. The result: handoffs, backlog churn, and repetitive work (provider bumps, module updates, control fixes, tagging) that mattered but belonged to no one.

This sat within HashiCorp's broader agentic exploration. HCP Terraform was already where infrastructure changes were planned and applied — if it could safely generate and write fixes back to source control, the same pattern could extend to security, cost, upgrades, and other maintenance work.

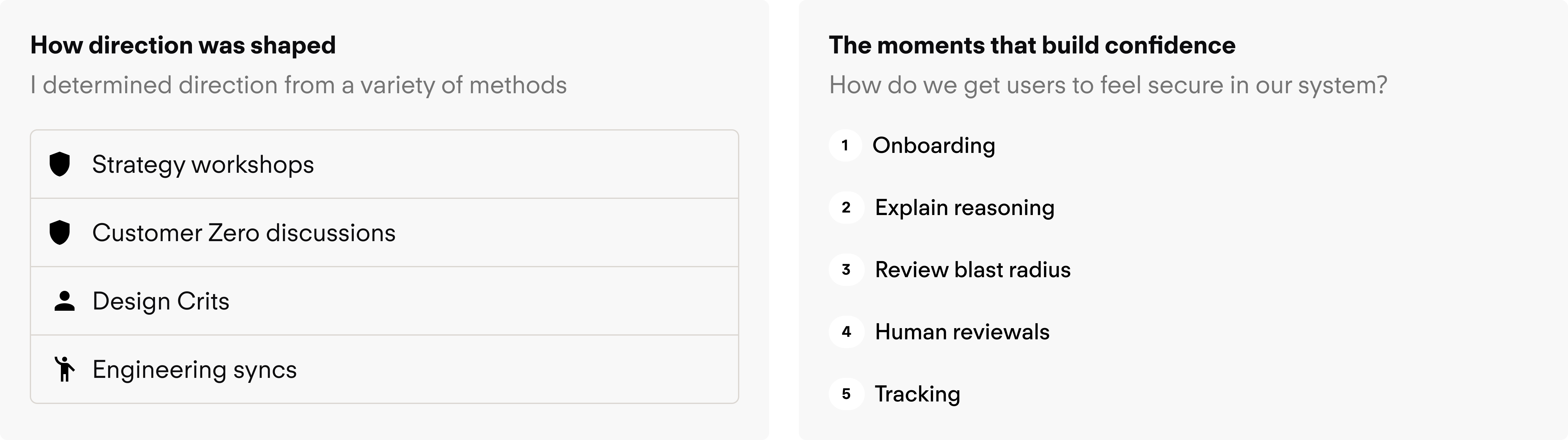

I used these recurring signals to frame the product shape and decide where to focus the first useful experience.

Detection wasn't the bottleneck — Teams already had signals from security and cost tools. What they lacked was a low-friction path from issue detection to a reviewable code change.

Toil reduction was the clearest value — Repetitive, lower-ambiguity tasks like provider upgrades and straightforward fixes were the right place to start. Not autonomous management; just getting painful maintenance off people's plates.

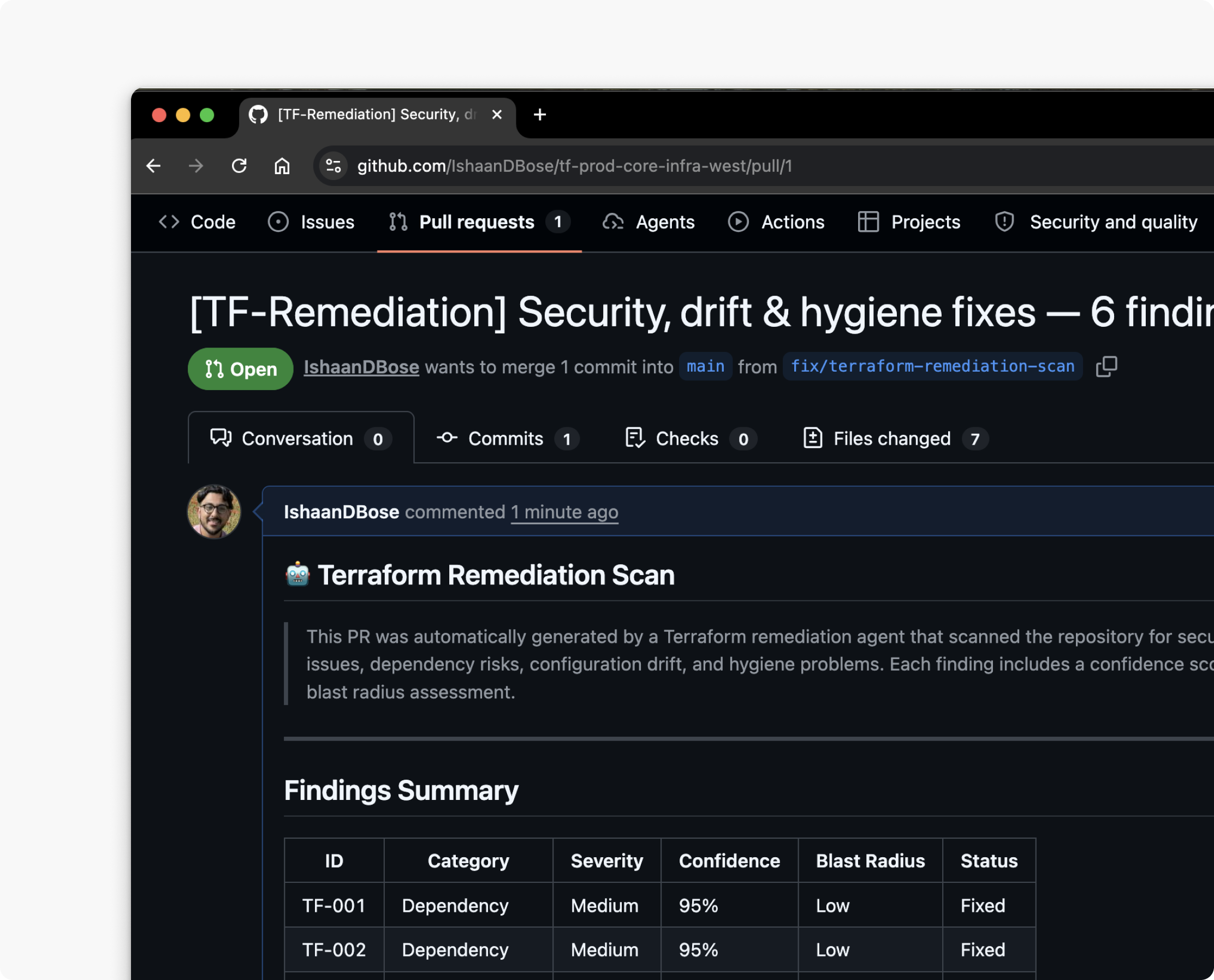

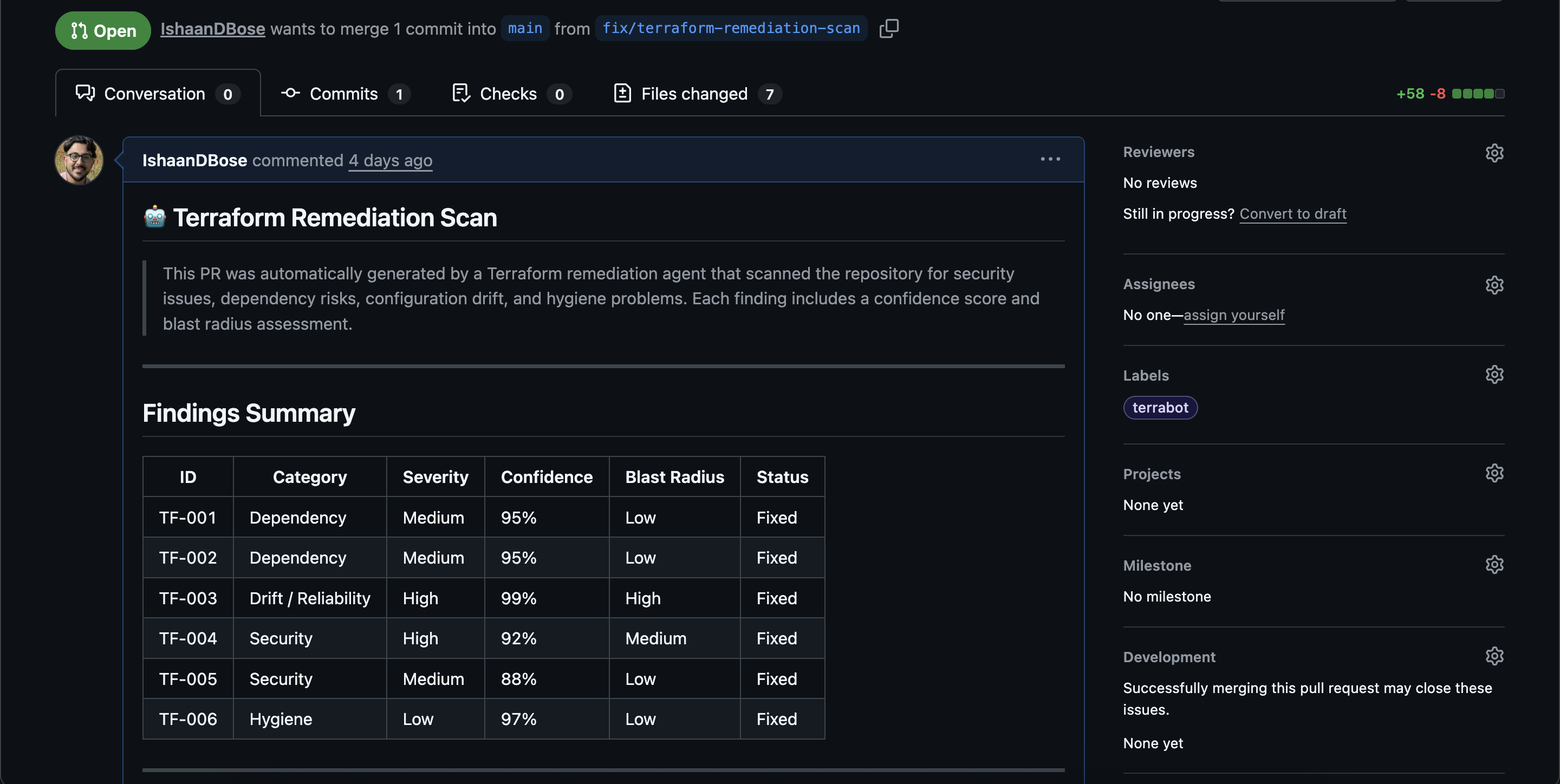

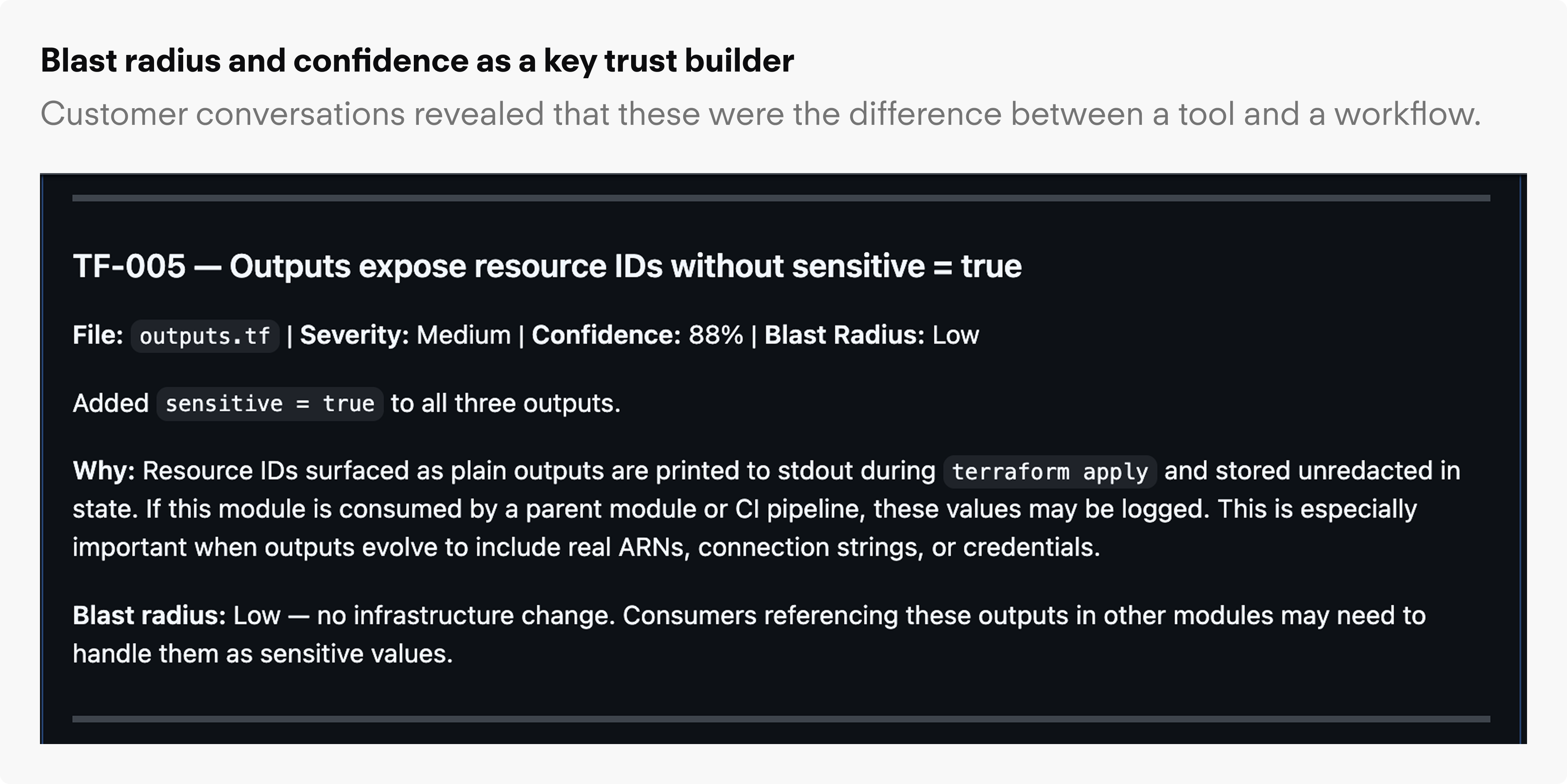

Trust depended on context, not accuracy — When people saw auto-created PRs, they wanted to know what rule triggered it, how confident the system was, what else might be affected, and whether similar changes could be grouped.

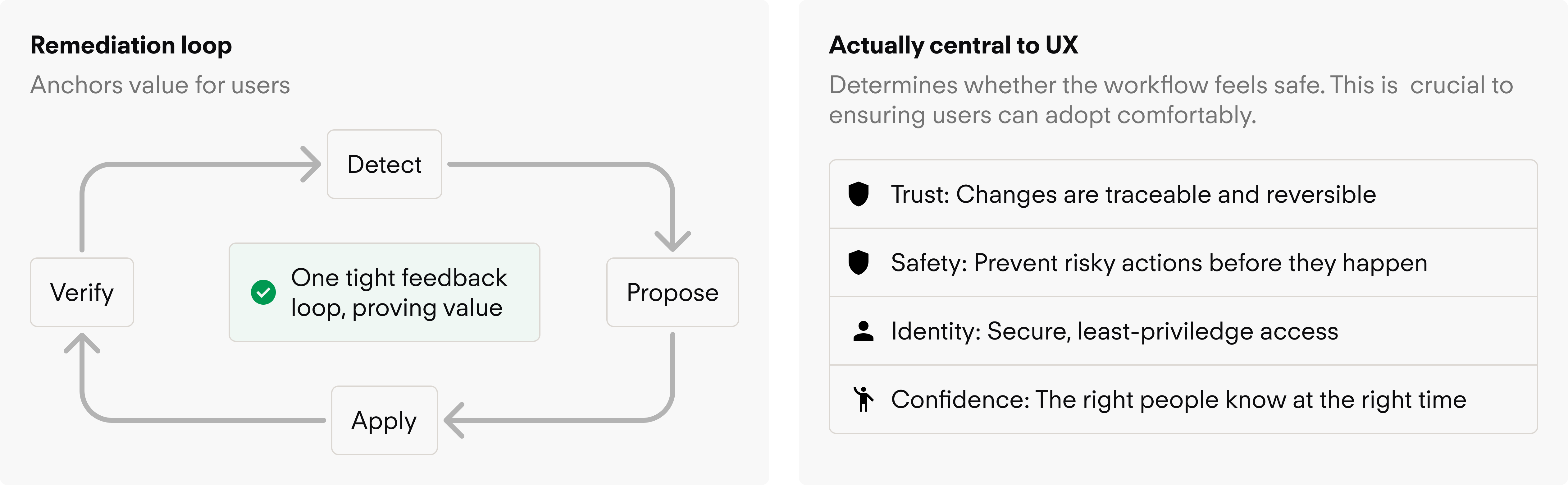

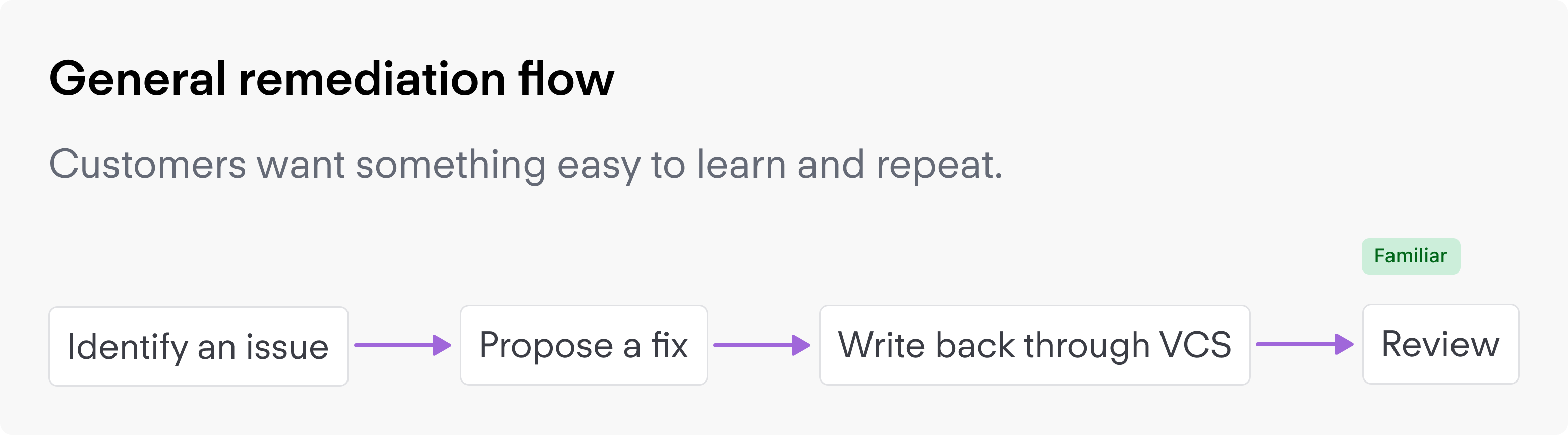

The biggest pull was toward a broader "agentic experience" connecting many tools and use cases at once. But the bigger the concept got, the harder it was to make the first workflow feel concrete and trustworthy. The team kept returning to a narrower question: what is the first remediation loop that creates real value and proves the model?

Challenge: The concept could easily drift toward an open-ended assistant that felt powerful in demos but vague in practice.

Decision: Pushed the experience toward a focused workflow: proactive scanning that ends in a generated, reviewable PR.

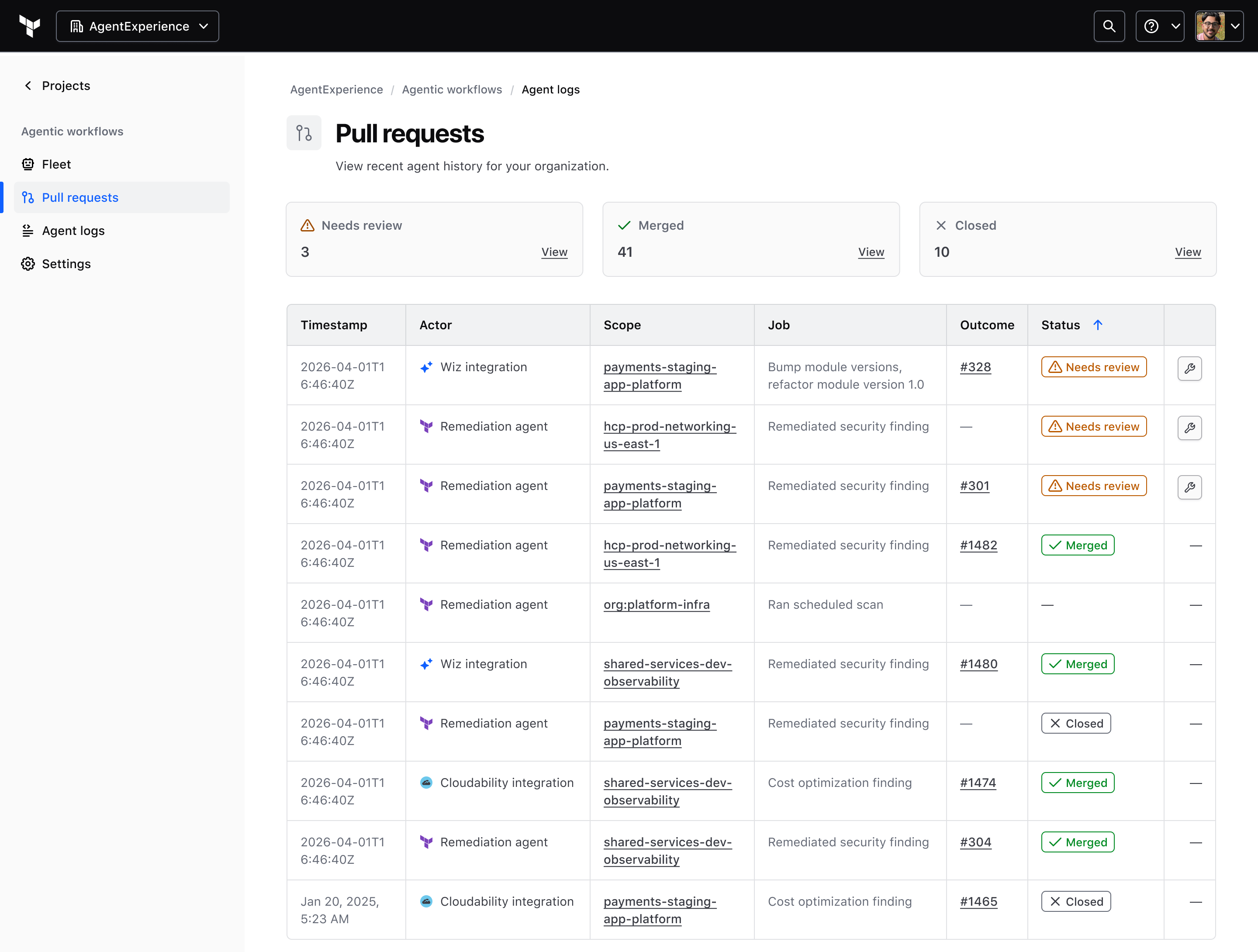

Challenge: An auto-created PR is meaningless without context. Teams needed to understand why a change existed before they could act on it.

Decision: Confidence signal, rule context, blast radius, and a history of prior PRs and actions were not extra polish; they were the product. Treated each as a required surface, not a nice-to-have.
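One way to read "required surface" is as a data contract: a generated PR cannot be described without its context. The sketch below is only an illustration of that idea. The class, its field names, and the `render_pr_body` helper are all hypothetical and do not reflect HCP Terraform's actual data model or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class RemediationContext:
    """Hypothetical context attached to every generated PR."""

    rule_id: str                # rule or finding that triggered the change
    confidence: float           # 0.0 to 1.0, how confident the system is in the fix
    blast_radius: list[str]     # workspaces or modules the change may touch
    prior_prs: list[str] = field(default_factory=list)  # history of related PRs

    def __post_init__(self) -> None:
        # Rule context, confidence, and blast radius are mandatory, not optional polish.
        if not self.rule_id:
            raise ValueError("rule context is required")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")
        if not self.blast_radius:
            raise ValueError("blast radius is required")


def render_pr_body(ctx: RemediationContext) -> str:
    """Build the explanatory section of a PR description from its context."""
    history = ", ".join(ctx.prior_prs) if ctx.prior_prs else "none"
    return "\n".join(
        [
            f"Triggered by rule: {ctx.rule_id}",
            f"Confidence: {ctx.confidence:.0%}",
            f"Blast radius: {', '.join(ctx.blast_radius)}",
            f"Related PRs: {history}",
        ]
    )
```

Making the validation fail loudly mirrors the design decision: a PR missing any of these surfaces is treated as incomplete rather than shipped with gaps.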

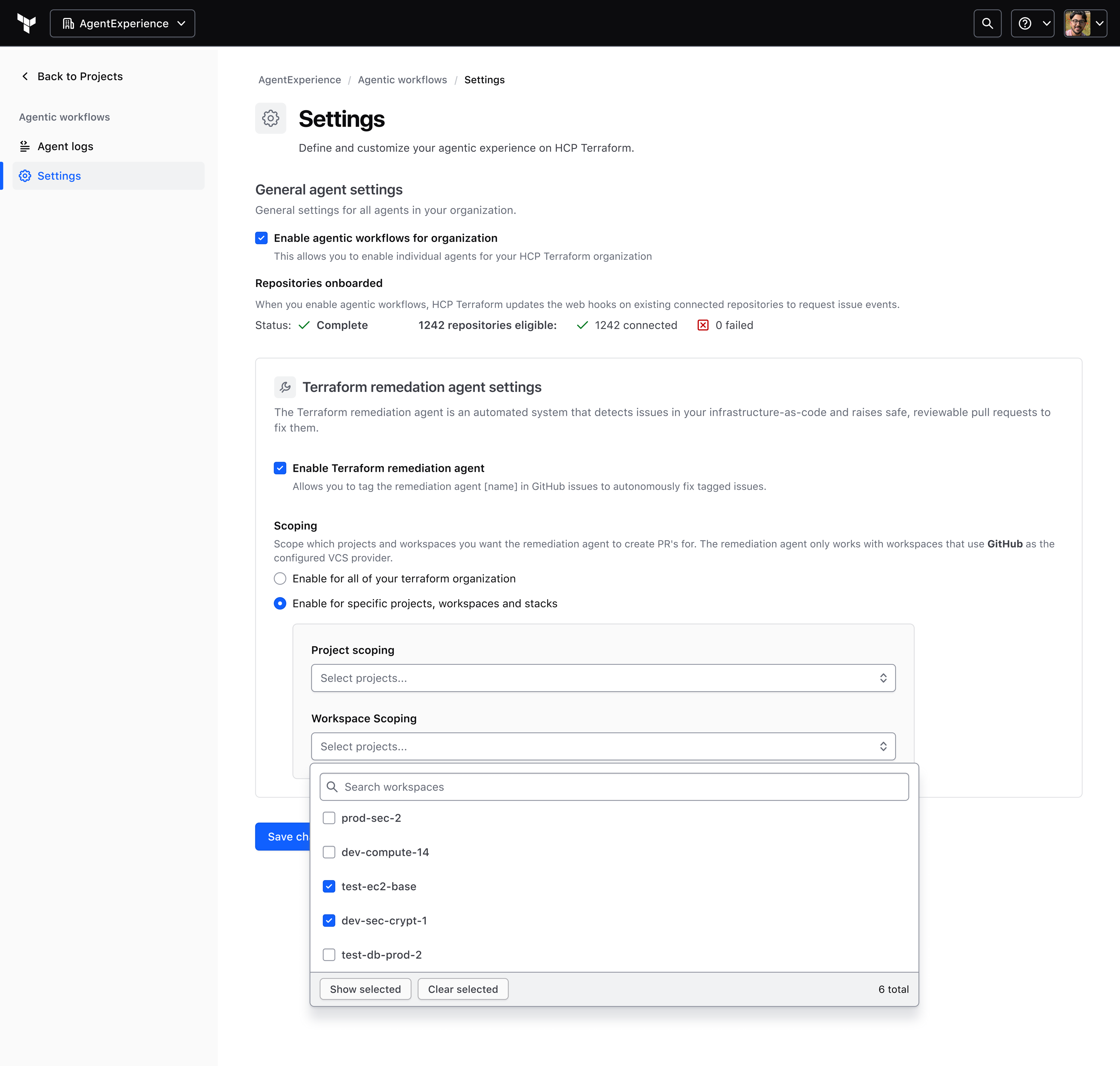

Challenge: Designing a one-off handoff from a single source would require rethinking the pattern each time a new integration was added.

Decision: Evolved toward a general write-back model (issue creation, PR history, logs, notifications, and approval states) that could support multiple remediation sources over time.
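To make the "general write-back model" concrete, here is a rough sketch of a single record type that any remediation source could feed. It assumes a simple three-state approval flow and invented names (`WriteBack`, `ApprovalState`, `notification_text`); the real model may look quite different.

```python
from dataclasses import dataclass, field
from enum import Enum


class ApprovalState(Enum):
    """Assumed approval states; the actual set is not specified in this case study."""

    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class WriteBack:
    """Hypothetical source-agnostic record for one remediation write-back."""

    source: str       # any remediation source, e.g. a security or cost tool
    issue: str        # the issue created for the finding
    pr_title: str     # the PR opened with the proposed fix
    state: ApprovalState = ApprovalState.PENDING
    log: list = field(default_factory=list)

    def review(self, reviewer: str, approved: bool) -> None:
        """Record a human decision; a write-back can only be reviewed once."""
        if self.state is not ApprovalState.PENDING:
            raise ValueError("write-back has already been reviewed")
        self.state = ApprovalState.APPROVED if approved else ApprovalState.REJECTED
        self.log.append(f"{reviewer} set state to {self.state.value}")


def notification_text(wb: WriteBack) -> str:
    """Format a notification the same way regardless of which source produced it."""
    return f"[{wb.source}] {wb.pr_title}: {wb.state.value}"
```

Because the source is just a field, adding a new integration means producing another `WriteBack` rather than designing a new handoff.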

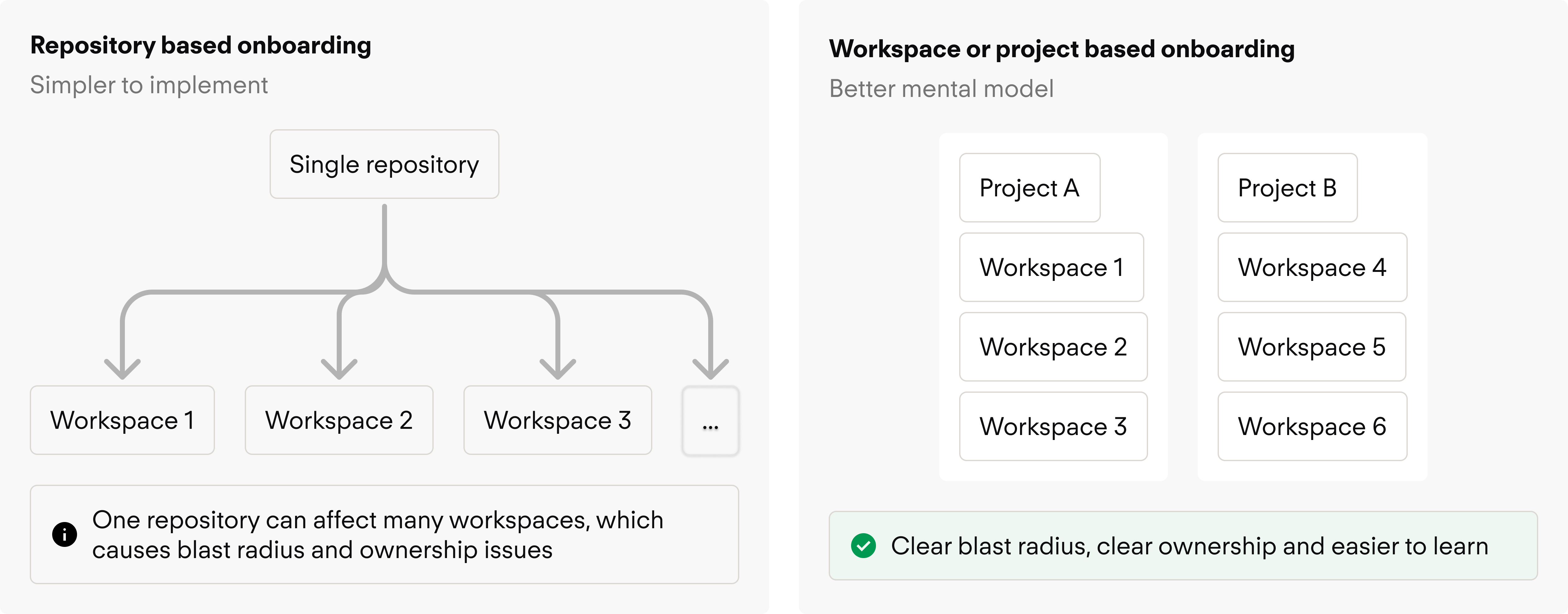

Challenge: Repo-based onboarding was technically simpler, but obscured blast radius and ownership, especially in monorepos and shared-module setups.

Decision: Steered toward workspace and project context, matching how Terraform operations are actually managed and giving users a clearer sense of what the agent was acting on.

The clearest outcome was a sharper private-beta direction. The team moved from a broad agentic concept toward a narrower remediation workflow built around proactive scanning, generated PRs, and reusable write-back patterns. The design work also made trust requirements explicit: confidence, blast radius, history, and explainability were not side features; they were part of the MVP.

The work shaped the private-beta definition, clarified where the initial experience should live, and influenced how adjacent integration and VCS workflows were framed. It also surfaced the operational requirements that needed to be solved before the experience could scale beyond an early-adopter setting.

Proactive remediation loop

From broad agentic exploration to a focused loop: detect issue → generate fix → open PR → human review

Trust as a product requirement

Confidence, blast radius, rule context, and history treated as core surfaces, not secondary features

Reusable write-back pattern

A general model for PR creation, issue history, and notifications that could support multiple remediation sources

This project changed how I think about AI in enterprise products. The design problem was never "how do we add an agent?" It was how to make automation feel accountable inside a messy system of repos, workspaces, permissions, and human ownership boundaries.

It pushed me to think more like a systems designer, connecting product strategy, platform constraints, and UX details that could easily get dismissed as implementation concerns. And it reinforced something I keep coming back to in enterprise work: the more powerful the automation, the more important it is to design for verification, control, and shared understanding.